GestOnHMD

GestOnHMD: Enabling Gesture-based Interaction on the Surface of Low-cost VR Head-Mounted Display

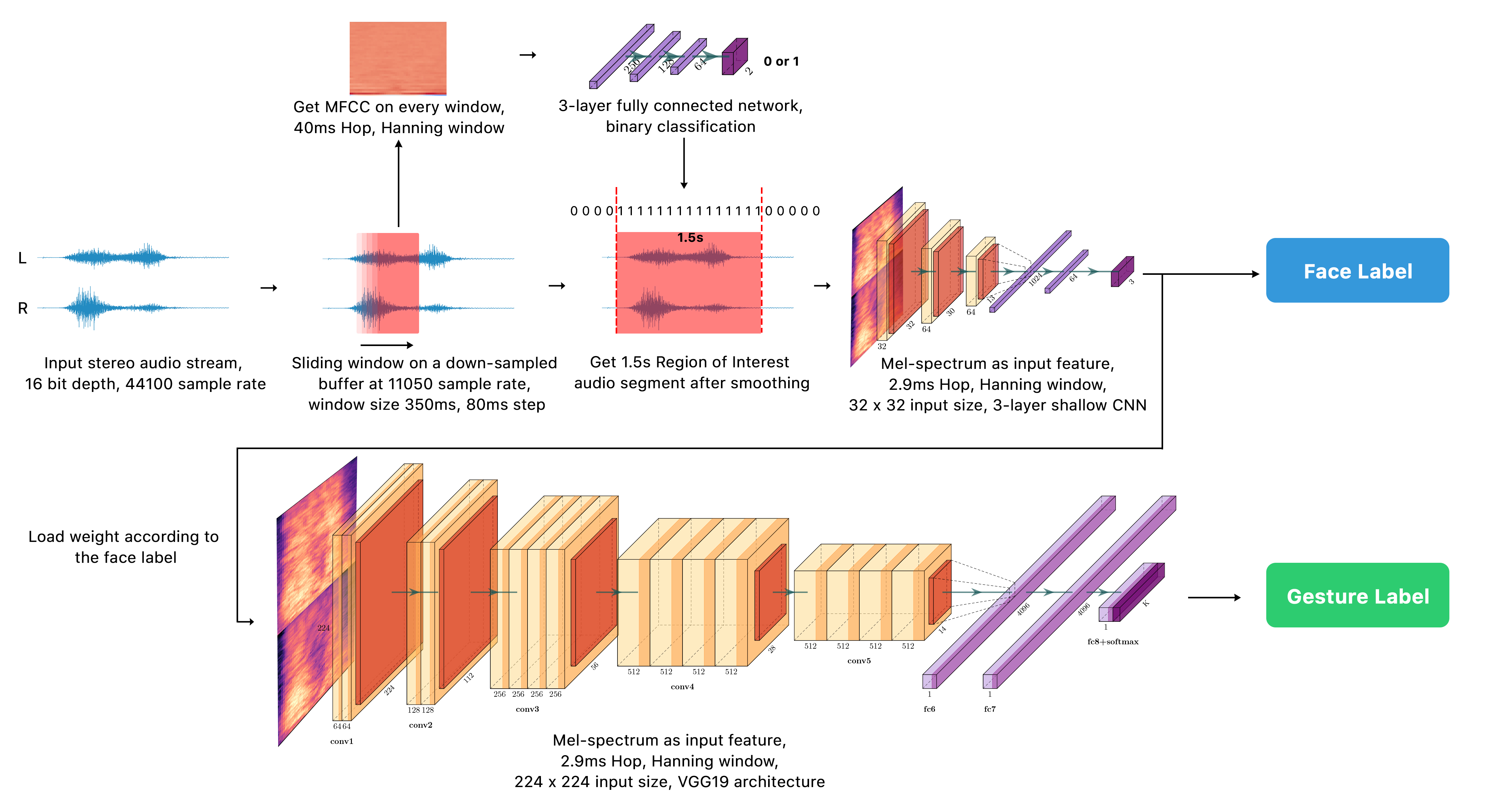

Low-cost virtual-reality (VR) head-mounted displays (HMDs) that integrate a smartphone have brought immersive VR to the masses and increased its ubiquity. However, these systems are often limited by poor interactivity. In this paper, we present GestOnHMD, a gesture-based interaction technique and gesture-classification pipeline that leverages the stereo microphones of a commodity smartphone to detect tapping and scratching gestures on the front, left, and right surfaces of a mobile VR headset. Focusing on the Google Cardboard, we first conducted a gesture-elicitation study that generated 150 user-defined gestures, 50 on each surface. We then selected 15, 9, and 9 gestures for the front, left, and right surfaces, respectively, based on user preference and signal detectability. We constructed a data set containing the acoustic signals of 18 users performing these on-surface gestures, and trained deep-learning classification models for gesture detection and recognition. The three-step pipeline of GestOnHMD achieved an overall accuracy of 98.2% for gesture detection, 98.2% for surface recognition, and 97.7% for gesture recognition. Lastly, using a real-time demonstration of GestOnHMD, we conducted a series of online participatory-design sessions to collect a set of user-defined gesture-referent mappings that could potentially benefit from GestOnHMD.

Authors: Taizhou Chen, Lantian Xu, Xianshan Xu, and Kening Zhu

Code and Dataset at: https://github.com/taizhouchen/GestOnHMD
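
The three-step pipeline named in the abstract (gesture detection, then surface recognition, then gesture recognition) can be pictured as a simple cascade. The sketch below is illustrative only and is not taken from the repository: the `Cascade` class, the `toy_*` functions, the energy thresholds, and the use of one gesture classifier per surface are all assumptions, and the toy functions merely stand in for the trained deep-learning models.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Sequence, Tuple

# Hypothetical clip type: (left_channel, right_channel) from the stereo microphones.
Clip = Tuple[Sequence[float], Sequence[float]]

# Gesture counts per surface as reported in the abstract (15 + 9 + 9).
GESTURES_PER_SURFACE = {"front": 15, "left": 9, "right": 9}


@dataclass
class Cascade:
    """Three-step cascade; each field is a stand-in for a trained model."""
    detect: Callable[[Clip], bool]                   # step 1: is there a gesture?
    which_surface: Callable[[Clip], str]             # step 2: front / left / right
    which_gesture: Dict[str, Callable[[Clip], int]]  # step 3: assumed one model per surface

    def classify(self, clip: Clip) -> Optional[Tuple[str, int]]:
        if not self.detect(clip):
            return None  # no gesture found, so later steps are skipped
        surface = self.which_surface(clip)
        gesture_id = self.which_gesture[surface](clip)
        return surface, gesture_id


def mean_energy(channel: Sequence[float]) -> float:
    return sum(x * x for x in channel) / len(channel)


# Toy stand-ins (assumptions), used only to make the control flow runnable.
def toy_detect(clip: Clip, threshold: float = 0.01) -> bool:
    return max(mean_energy(clip[0]), mean_energy(clip[1])) > threshold


def toy_surface(clip: Clip, ratio: float = 2.0) -> str:
    left, right = mean_energy(clip[0]), mean_energy(clip[1])
    if left > ratio * right:
        return "left"
    if right > ratio * left:
        return "right"
    return "front"


def toy_gesture(clip: Clip) -> int:
    return 0  # placeholder: always predicts gesture index 0


pipeline = Cascade(
    detect=toy_detect,
    which_surface=toy_surface,
    which_gesture={name: toy_gesture for name in GESTURES_PER_SURFACE},
)

silence: Clip = ([0.0] * 8, [0.0] * 8)
left_tap: Clip = ([0.5] * 8, [0.1] * 8)
front_tap: Clip = ([0.3] * 8, [0.3] * 8)
```

Calling `pipeline.classify(clip)` returns `None` when the first step rejects the clip, and otherwise a `(surface, gesture_id)` pair. Rejecting clips early means the later, finer-grained classifiers only run on audio already judged to contain a gesture.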