About MEI Lab

The Multimodal and Embodied Interaction (MEI) Laboratory is a human-computer interaction research group in the School of Creative Media, City University of Hong Kong.

MEI, 美 in Chinese, means beautiful and elegant. In MEI Lab, we aim to create, innovate, and enhance the beauty in human-computer interaction through multimodal and embodied interfaces.

MEI can also be read as Magical Experiences and Interfaces. Through Multimodal and Embodied Interaction, we therefore also aim to provide magical experiences in digital media. This vision echoes the famous quote by Arthur C. Clarke, "Any sufficiently advanced technology is indistinguishable from magic."

While today's computer interfaces are mainly visual and auditory, in MEI Lab we further envision a “touchable” future for our society. Our research interests include tangible user interfaces, wearable user interfaces, mobile user interfaces, virtual and augmented reality, and the application of these interfaces and technologies in education, entertainment, accessibility, and other domains.

Latest News

Please refer to our projects and publications page for more details.

Award

We received a couple of awards at the 10th "Cross-Strait Emerging Design Competition · Huacan Award". Congrats to Lina, Haichen, and the team!

Paper Publication

Our paper "TesselTex: Modulating Tactile Experience of Texture Roughness on Actuated Surfaces Composed of Tessellated Everyday Materials" was accepted by PACMHCI ISS track / ISS 2026. Congrats to Xingyu and the team!

Paper Publication

Our paper "ProXeek: Seeking and Leveraging Real-World Objects and Environments as Haptic Proxies for Virtual Reality through Multimodal Reasoning" was accepted by SIGGRAPH 2026 as a journal-track (ACM Transactions on Graphics - TOG) technical paper. Congrats to Haichen and the team!

Paper Publications

Our lab got three full papers accepted by CHI 2026: two home-brewed (LiqMetCraft and Bondi) and one co-authored (AnkleType). Congrats to the team!

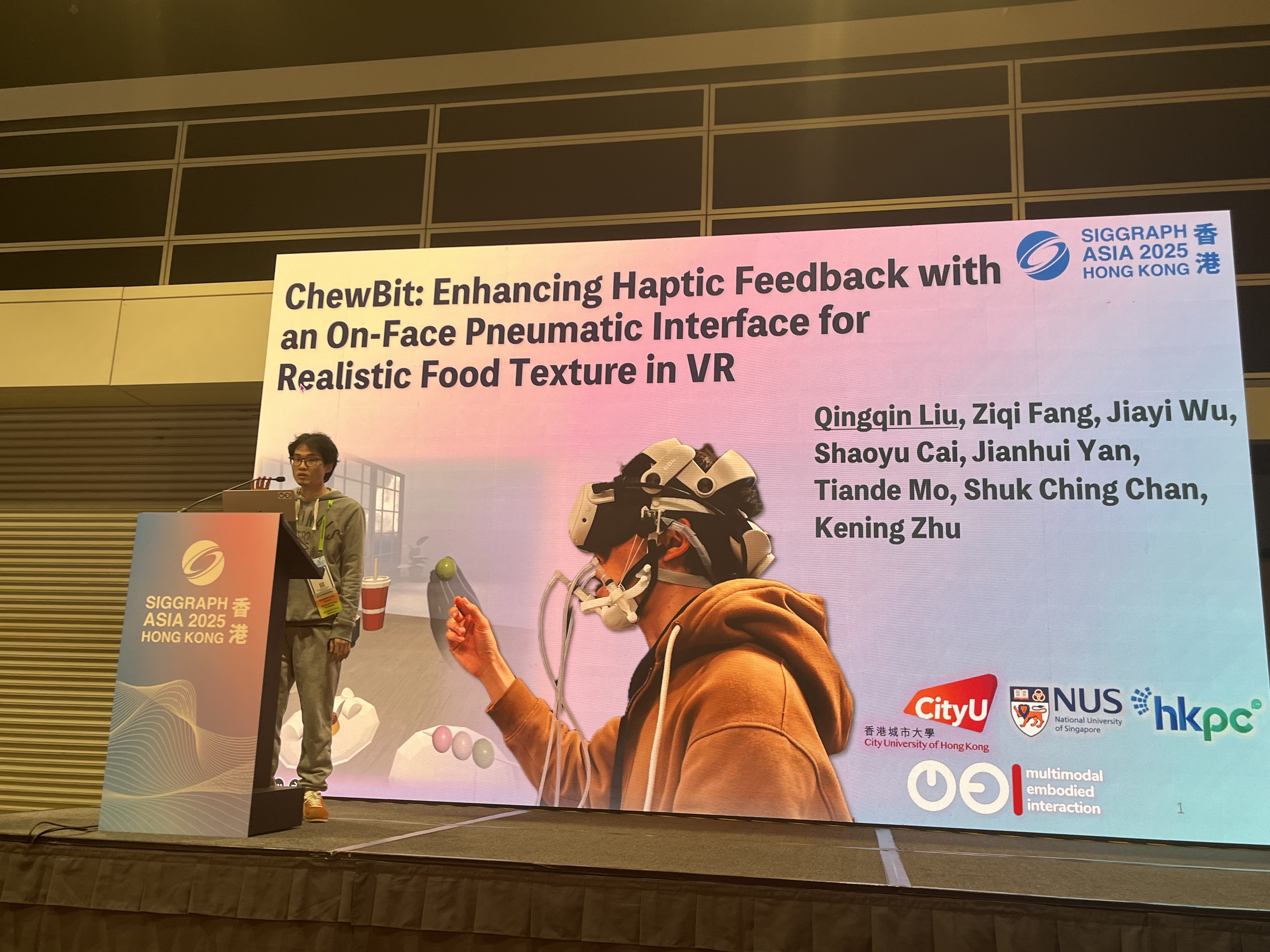

Demo and Awards

ChewBit (VirChew Reality) was demonstrated in the Emerging Technologies program at SIGGRAPH Asia 2025 and received the Audience Choice Award. Congrats to the team!

Awards

Congrats to Haichen for the Research Tuition Scholarship, and to Qingqin and Zhangqi for the Outstanding Academic Performance Award.

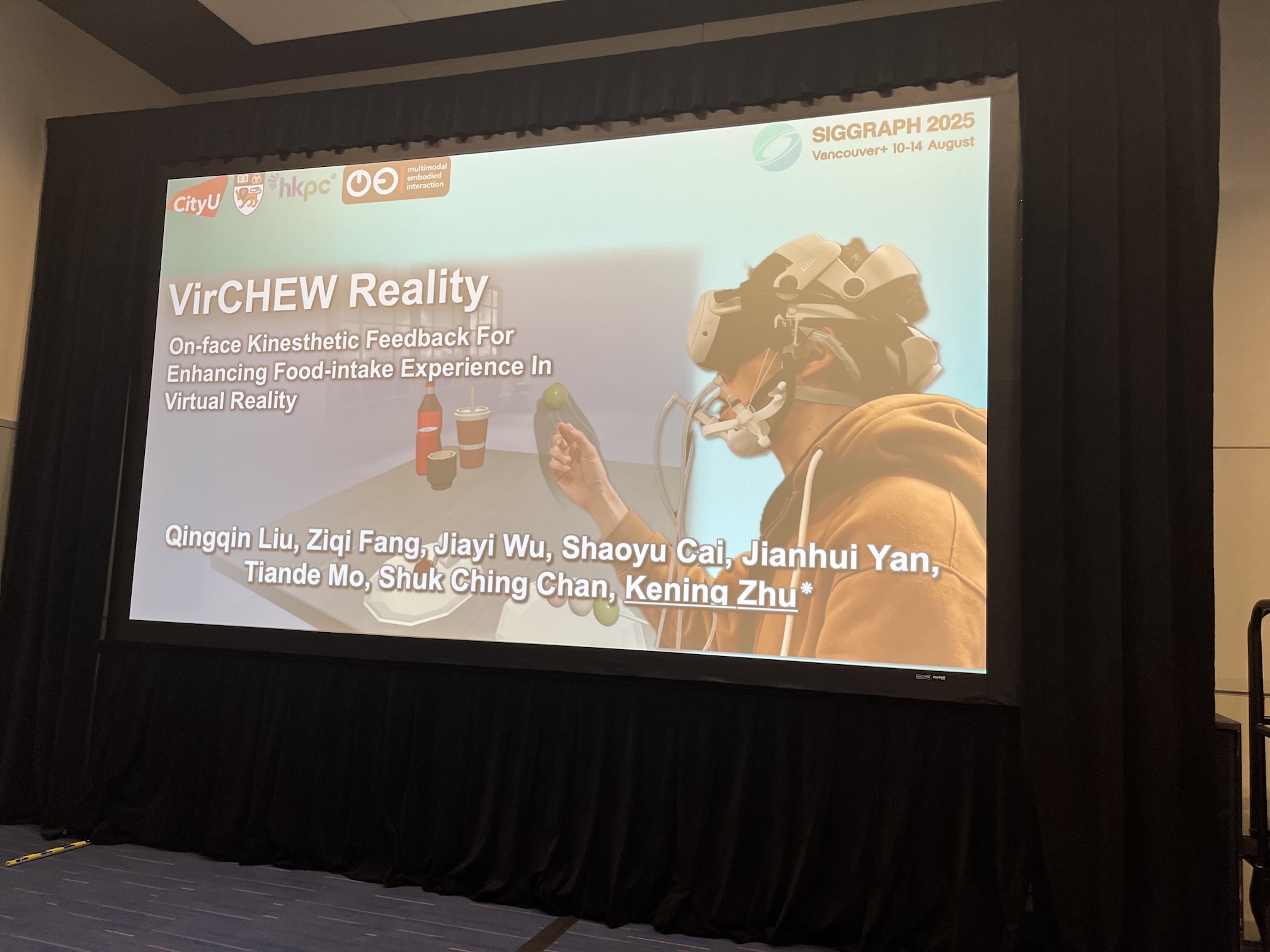

Paper Presentation and Award

We presented VirCHEW Reality at SIGGRAPH 2025, and our paper was voted one of the "Top 10 Technical Papers Fast Forward From SIGGRAPH 2025" (we may have ranked 2nd :)). Congrats to Qingqin and the team!

Award

ThermOuch received a Silver Medal at the 50th International Exhibition of Inventions of Geneva (IEIG). Congrats to Haichen and the team!

Earlier news can be found here.